The world of software engineering is currently grappling with a fundamental paradox of the AI era: as models become more capable, the “systems problem” of managing them has become the primary obstacle to real-world productivity. Although a developer may have access to the raw intelligence of frontier models, that intelligence often degrades the moment a task requires a long horizon or a deep context window.

But help appears to be on the way: San Francisco-based, Y Combinator-backed startup Random Labs has officially launched Slate V1, described as the industry’s first “swarm native” autonomous coding agent, designed to execute massively parallel, complex engineering tasks.

Emerging from an open beta, the tool uses “dynamic pruning algorithms” to maintain context across large codebases while scaling output to enterprise complexity. Co-founded by Kiran and Mihir Chintawar in 2024, the company aims to address the global engineering shortage by positioning Slate as a collaboration tool for the “next 20 million engineers” rather than a replacement for human developers.

With the release of Slate V1, the team at Random Labs is attempting to lead the way out of this bind by introducing the first “swarm-native” agentic coding environment. Slate isn’t just a wrapper or a chatbot with file access; it is an implementation of a “hive mind” philosophy designed to scale agentic work with the complexity of a human organization.

By taking advantage of a new architectural primitive called Thread Weaving, Slate moves beyond the rigid task trees and lossy compaction methods that defined the first generation of AI coding assistants.

Strategy: Action Space

At the root of Slate’s effectiveness lies a deep engagement with Recursive Language Models (RLMs).

In a traditional setup, an agent may be asked to “fix a bug”, a prompt that forces the model to perform high-level strategy and low-level execution simultaneously.

Random Labs identifies this as a failure to tap into “knowledge overhang” – the hidden intelligence a model possesses, but cannot access effectively when it is strategically overwhelmed.

Slate solves this by using a central orchestration thread that essentially “programs in action space”. This orchestrator does not write code directly; instead, it uses a TypeScript-based DSL to dispatch parallel worker threads to handle specific, bounded tasks.

This creates a clear separation between the “kernel” – which manages the execution graph and maintains strategic alignment – and the worker “processes” that execute tactical operations in the terminal.
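To make the kernel-and-worker split concrete, here is a minimal TypeScript sketch. It is not Slate’s DSL, which the article does not show; every name in it (`BoundedTask`, `WorkerFn`, `orchestrate`, `stubWorker`) is hypothetical, and the worker is a stub standing in for a model-driven thread.

```typescript
// Hypothetical sketch: none of these names come from Slate's actual DSL.
type BoundedTask = { id: string; instruction: string };
type TaskResult = { taskId: string; summary: string };

// A worker handles exactly one bounded task. This stub stands in for a
// model-driven thread that would run commands in a terminal.
type WorkerFn = (task: BoundedTask) => Promise<TaskResult>;

const stubWorker: WorkerFn = async (task) => ({
  taskId: task.id,
  summary: `done: ${task.instruction}`,
});

// The "kernel": it dispatches the plan but never performs the work itself.
function orchestrate(tasks: BoundedTask[], worker: WorkerFn): Promise<TaskResult[]> {
  // Promise.all starts every worker concurrently and keeps the input order.
  return Promise.all(tasks.map((task) => worker(task)));
}

const taskPlan: BoundedTask[] = [
  { id: "t1", instruction: "reproduce the failing test" },
  { id: "t2", instruction: "search for callers of parseConfig" },
];

const finished = orchestrate(taskPlan, stubWorker);
finished.then((results) => {
  for (const r of results) console.log(r.taskId, r.summary);
});
```

The point of the sketch is the division of labor: the orchestrator only sees task descriptions and results, while everything tactical stays inside the worker function.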

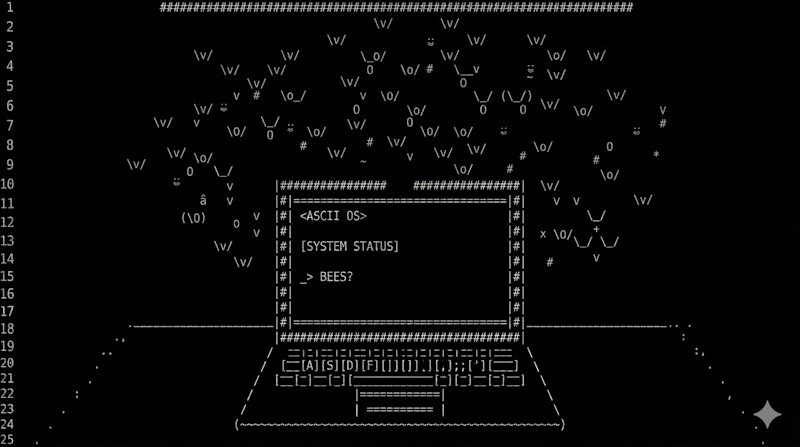

By mapping onto an OS-style framework inspired by Andrej Karpathy’s “LLM OS” concept, Slate is able to treat a model’s limited context window as precious RAM, actively and intelligently managing what is kept and what is discarded.
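The “context window as RAM” idea can be illustrated with a simple token budget. The sketch below is an assumption for illustration only: it uses a greedy, priority-ordered fit, which is not claimed to be Slate’s “dynamic pruning algorithm”, and the `ContextItem` shape, the priorities, and the token counts are all made up.

```typescript
// Hypothetical sketch of budgeted context management (not Slate's algorithm).
type ContextItem = { label: string; tokens: number; priority: number };

// Greedy fit: consider items from highest to lowest priority and keep each
// one only if it still fits in the remaining token budget.
function fitToBudget(
  items: ContextItem[],
  budget: number
): { kept: ContextItem[]; dropped: ContextItem[] } {
  const sorted = [...items].sort((a, b) => b.priority - a.priority);
  const kept: ContextItem[] = [];
  const dropped: ContextItem[] = [];
  let used = 0;
  for (const item of sorted) {
    if (used + item.tokens <= budget) {
      kept.push(item);
      used += item.tokens;
    } else {
      dropped.push(item);
    }
  }
  return { kept, dropped };
}

// Illustrative values only.
const contextItems: ContextItem[] = [
  { label: "old build log", tokens: 4000, priority: 1 },
  { label: "current plan", tokens: 200, priority: 3 },
  { label: "failing test output", tokens: 500, priority: 2 },
];

const fit = fitToBudget(contextItems, 1000);
console.log("kept:", fit.kept.map((i) => i.label));
console.log("dropped:", fit.dropped.map((i) => i.label));
```

With a 1,000-token budget, the plan and the test output fit and the large build log is evicted, which mirrors the trade-off the RAM analogy describes.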

Episodic memory and the swarm

The true innovation of the “thread weaving” approach lies in how it handles memory. Most agents today rely on “compaction,” which is often a fancy term for lossy compression that risks dropping critical project state. Slate instead generates “episodes”.

When a worker thread completes a task, it does not return a detailed transcript of each failed attempt; it returns a brief summary of successful tool calls and findings.

Because these episodes share context directly with the orchestrator rather than relying on brittle message passing, the system maintains a “swarm” intelligence.
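As a rough illustration of what an episode might look like, the sketch below reduces a worker’s full transcript to its successful tool calls plus a list of findings. The `ToolCall` and `Episode` shapes and the `toEpisode` helper are hypothetical; the article does not describe Slate’s real episode format.

```typescript
// Hypothetical types: Slate's real episode format is not described here.
type ToolCall = { tool: string; input: string; ok: boolean; output: string };
type Episode = { threadId: string; steps: string[]; findings: string[] };

// Keep only the successful tool calls; failed attempts are dropped so the
// orchestrator receives a short summary instead of the full transcript.
function toEpisode(threadId: string, transcript: ToolCall[], findings: string[]): Episode {
  const steps = transcript
    .filter((call) => call.ok)
    .map((call) => `${call.tool}: ${call.input}`);
  return { threadId, steps, findings };
}

// Illustrative transcript with one failed attempt.
const workerTranscript: ToolCall[] = [
  { tool: "shell", input: "npm test", ok: false, output: "3 failing" },
  { tool: "edit", input: "src/parser.ts", ok: true, output: "patched" },
  { tool: "shell", input: "npm test", ok: true, output: "all passing" },
];

const episode = toEpisode("worker-1", workerTranscript, ["off-by-one in token index"]);
console.log(JSON.stringify(episode, null, 2));
```

The orchestrator would then add only `episode` to its own context, never the raw `workerTranscript`.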

This architecture allows massive parallelism. A developer can have Claude Sonnet orchestrate a complex refactor while GPT-5.4 executes the code, and GLM 5 – favored for its agentic search capabilities – simultaneously researches library documentation in the background. This is similar to the approach Perplexity takes with its new Computer multi-model agent.

By selecting “the right model for the job,” Slate ensures that users are not overspending on intelligence for simple tactical moves while still benefiting from the strategic depth of the world’s most powerful models.
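One simple way to picture “the right model for the job” is a routing table keyed by role. This is a sketch under assumptions: the `ThreadRole` names and `pickModel` helper are hypothetical, and the model strings are placeholders based on the example above, not real API model identifiers or Slate configuration.

```typescript
// Hypothetical routing table; the strings are placeholders, not real model IDs.
type ThreadRole = "orchestrate" | "execute" | "research";

const modelRoutes: Record<ThreadRole, string> = {
  orchestrate: "claude-sonnet", // strategic planning
  execute: "gpt-5.4",           // writing and running code
  research: "glm-5",            // background documentation search
};

// Look up which model a thread with the given role should use.
function pickModel(role: ThreadRole): string {
  return modelRoutes[role];
}

const roles: ThreadRole[] = ["orchestrate", "execute", "research"];
for (const role of roles) {
  console.log(`${role} -> ${pickModel(role)}`);
}
```

Because `Record<ThreadRole, string>` requires an entry for every role, adding a new role without assigning it a model becomes a compile-time error.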

The business of autonomy

From a business perspective, Random Labs is operating with a mix of transparency and strategic ambiguity in the early beta period.

Although the company has not yet published a fixed-price subscription sheet, the Slate CLI documentation confirms a shift toward a usage-based credit model.

Commands like /usage and /billing allow users to monitor their credit burn in real time, and the inclusion of an organization-level billing toggle suggests a clear focus on professional engineering teams rather than solo hobbyists.

There is also a significant push toward integration. Random Labs recently announced that direct support for OpenAI’s Codex and Anthropic’s Claude Code will be released next week.

This suggests that Slate is not trying to compete with the native interfaces of these models, but rather to act as a better orchestration layer that allows engineers to use them all at once, securely and cost-effectively.


Architecturally, the system is designed to maximize caching through subthread reuse, a “novel context engineering” move that the team claims prevents the swarm approach from becoming a financial burden for users.

Stability

Perhaps the most compelling argument for the Slate architecture is its stability. In internal testing, an early version of this threading system managed to pass 2/3 of the tests on the make-mips-interpreter task within the Terminal Bench 2.0 suite.

This is a task where even the latest frontier models, like Opus 4.6, often succeed less than 20% of the time when used in a standard, non-orchestrated harness.

This success in “transforming” or changing environments is what separates a tool from a partner. According to Random Labs’ documentation, a fintech founder in NYC described Slate as his “best debugging tool,” a sentiment that echoes Random Labs’ broader goal: building agents that don’t just complete a prompt, but work at scale like an organization.

As the industry moves beyond simple “chat with your code” interfaces, Slate V1’s “thread weaving” offers a glimpse of a future where the primary role of the human engineer is to direct a hive mind of specialized models, all working together to solve the long-horizon problems of modern software.